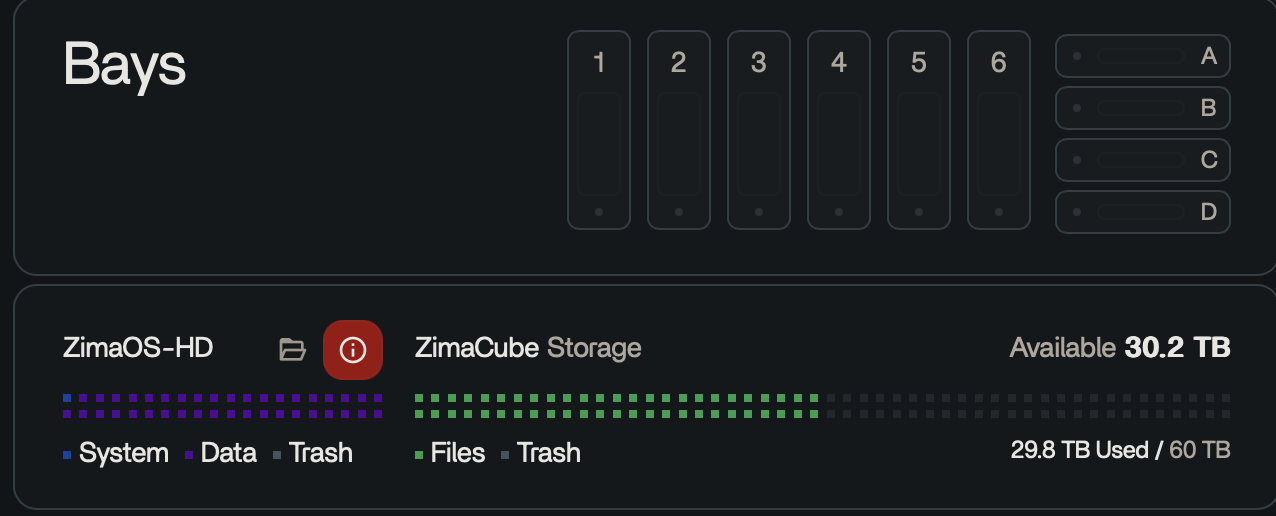

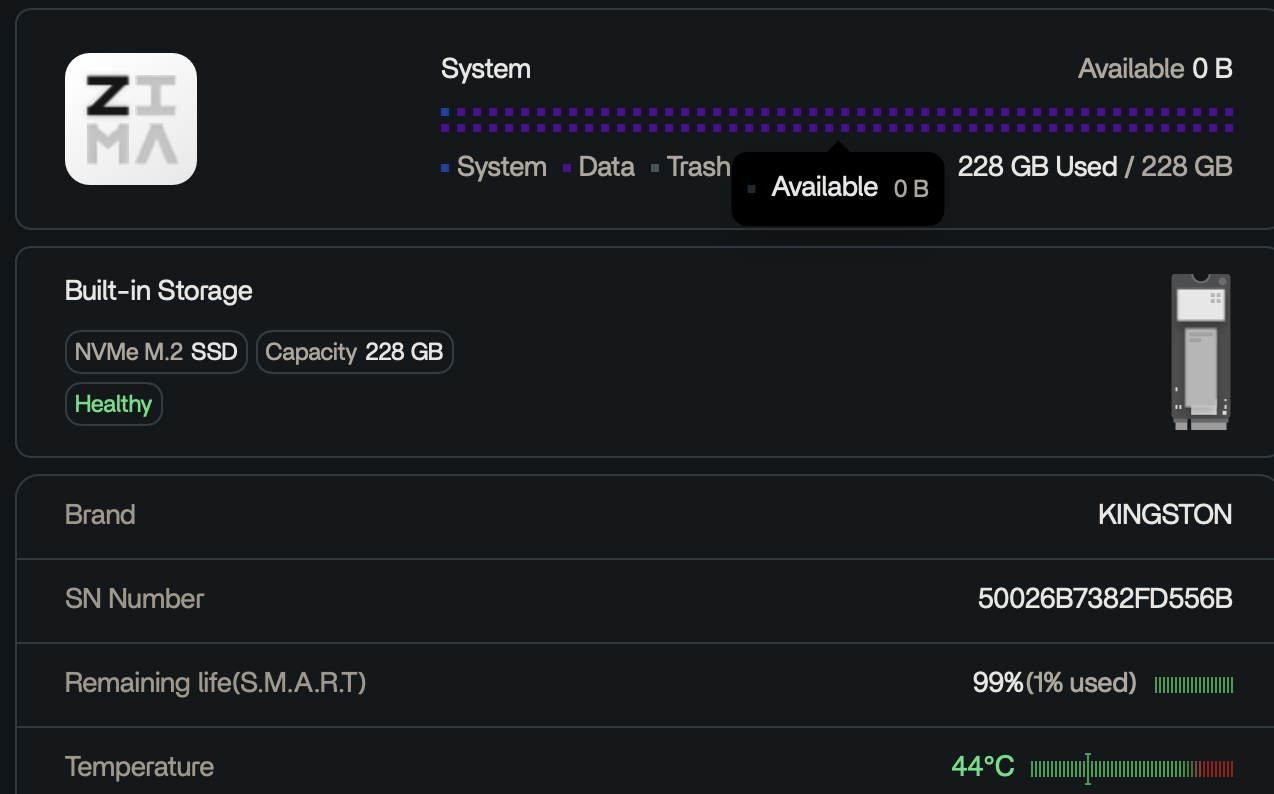

After running Resilio Sync on 30 TB of data, I think the indexing files have taken up a lot of space and filled up the ZimaOS partition that came with the Cube. Unfortunately, this has caused some glitches and corruption. The NAS functions are all working fine though (SMB, Finder, etc. are all good).

I’ve manually deleted some files through Finder, but either the space wasn’t freed or it quickly filled up again.
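To narrow down what is actually filling the partition, something like the following could be run from a shell on the NAS. This is only a minimal sketch: it assumes shell access and a Python 3 interpreter are available on the box, and the function names (`dir_sizes`, `tree_size`, `report`) and the `"/"` starting path are my own placeholders, not anything ZimaOS provides. It only reads file sizes and deletes nothing, and it skips directories that live on a different filesystem (such as the RAID mount) so that only the system partition is counted.

```python
import os
import shutil


def _same_dev(path, dev):
    """True if path is a real directory on the same filesystem (device) as dev."""
    try:
        return os.lstat(path).st_dev == dev
    except OSError:
        return False


def tree_size(path, dev):
    """Sum apparent file sizes under path, without crossing onto other filesystems."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(path, onerror=lambda e: None):
        # Prune subdirectories that are mount points for another device.
        dirnames[:] = [d for d in dirnames if _same_dev(os.path.join(dirpath, d), dev)]
        for name in filenames:
            try:
                total += os.lstat(os.path.join(dirpath, name)).st_size
            except OSError:
                continue  # file vanished or is unreadable
    return total


def dir_sizes(root):
    """Return {child path: bytes} for each immediate child of root."""
    root_dev = os.lstat(root).st_dev
    sizes = {}
    for entry in os.scandir(root):
        try:
            if entry.is_symlink():
                continue
            if entry.is_dir(follow_symlinks=False):
                if entry.stat(follow_symlinks=False).st_dev != root_dev:
                    continue  # different filesystem, e.g. the RAID volume
                sizes[entry.path] = tree_size(entry.path, root_dev)
            elif entry.is_file(follow_symlinks=False):
                sizes[entry.path] = entry.stat(follow_symlinks=False).st_size
        except OSError:
            continue
    return sizes


def report(root, top=10):
    """Print overall usage for root's filesystem and its largest children."""
    usage = shutil.disk_usage(root)
    print(f"{root}: {usage.used / 1e9:.1f} GB used of {usage.total / 1e9:.1f} GB")
    largest = sorted(dir_sizes(root).items(), key=lambda kv: kv[1], reverse=True)
    for path, size in largest[:top]:
        print(f"{size / 1e9:8.2f} GB  {path}")


# Example (the path is an assumption; point it at wherever the system partition is mounted):
# report("/")
```

The sizes are apparent file sizes, so they are approximate (hard links and sparse files can skew them), but the largest entries should still point at the directory to look into, after which `report` can be called again on that directory to drill down.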

I’ve restarted several times, but that hasn’t resolved the situation.

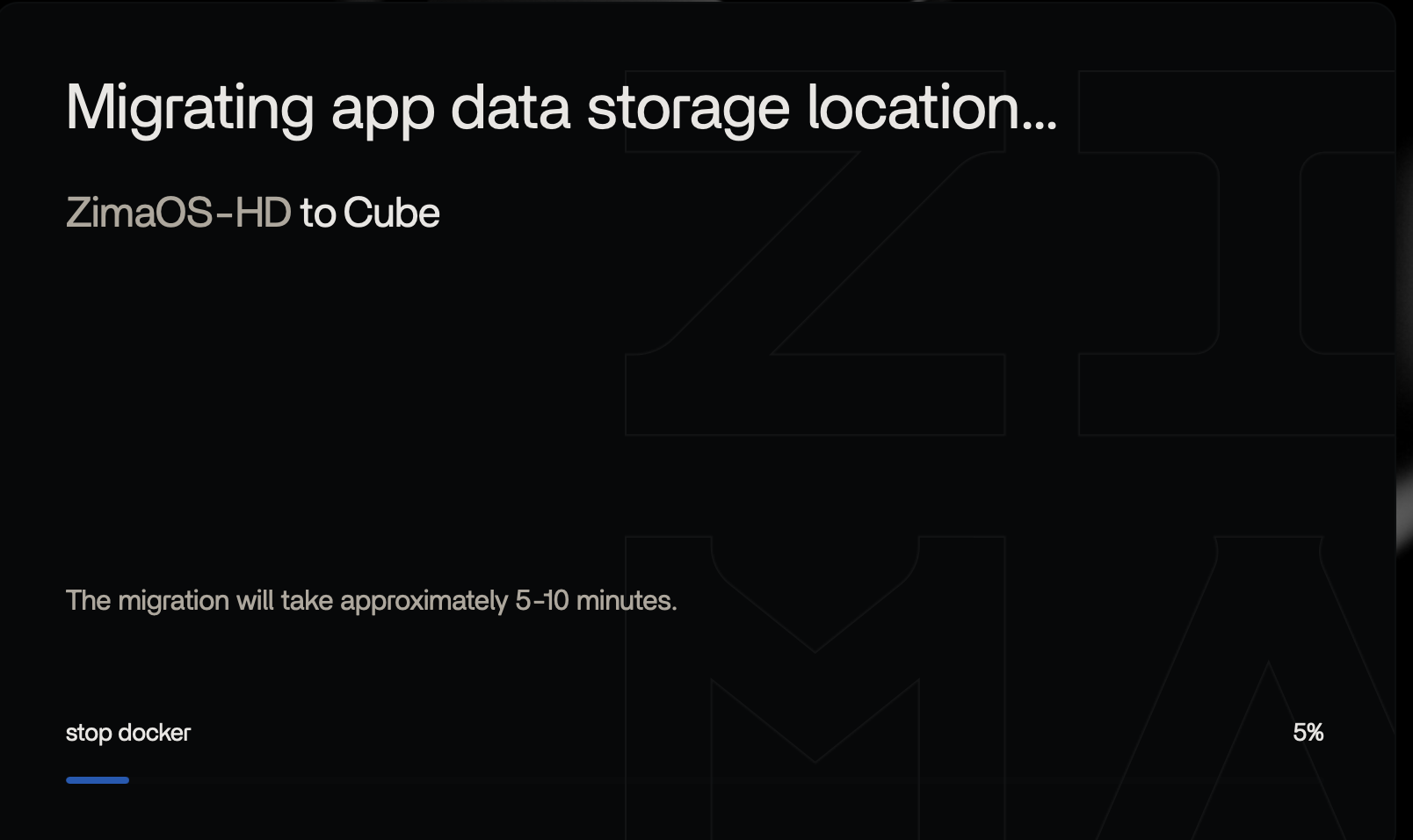

I did get the migration of data files to begin, but it has been stuck at 5% for two hours.

Whenever the dashboard page logs me out, I can log in and view the normal dashboard where there’s a warning about the ZimaOS partition.

But at this point the Migration section is either blank with no options available, or shows 14 GB of App Data available to move, which suggests the original migration never worked.

I’m not sure how to clear up space on the ZimaOS partition, or how to “reset” it or reinstall ZimaOS. What’s the safest way to fix this without risking the RAID database? My RAID, etc. is all working normally. (Which is funny after the disconnect/crash issues I had over the last few days.)

Update:

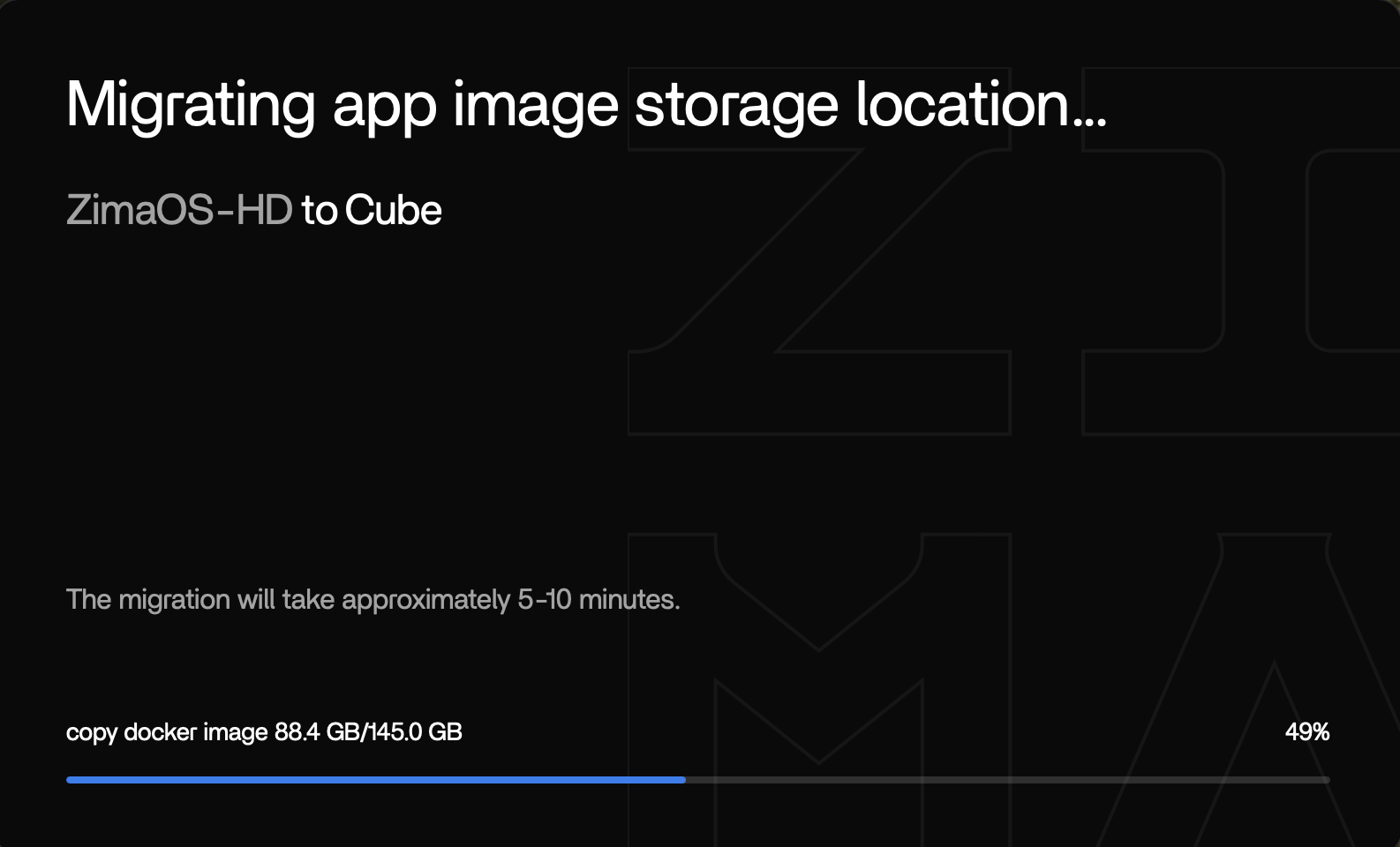

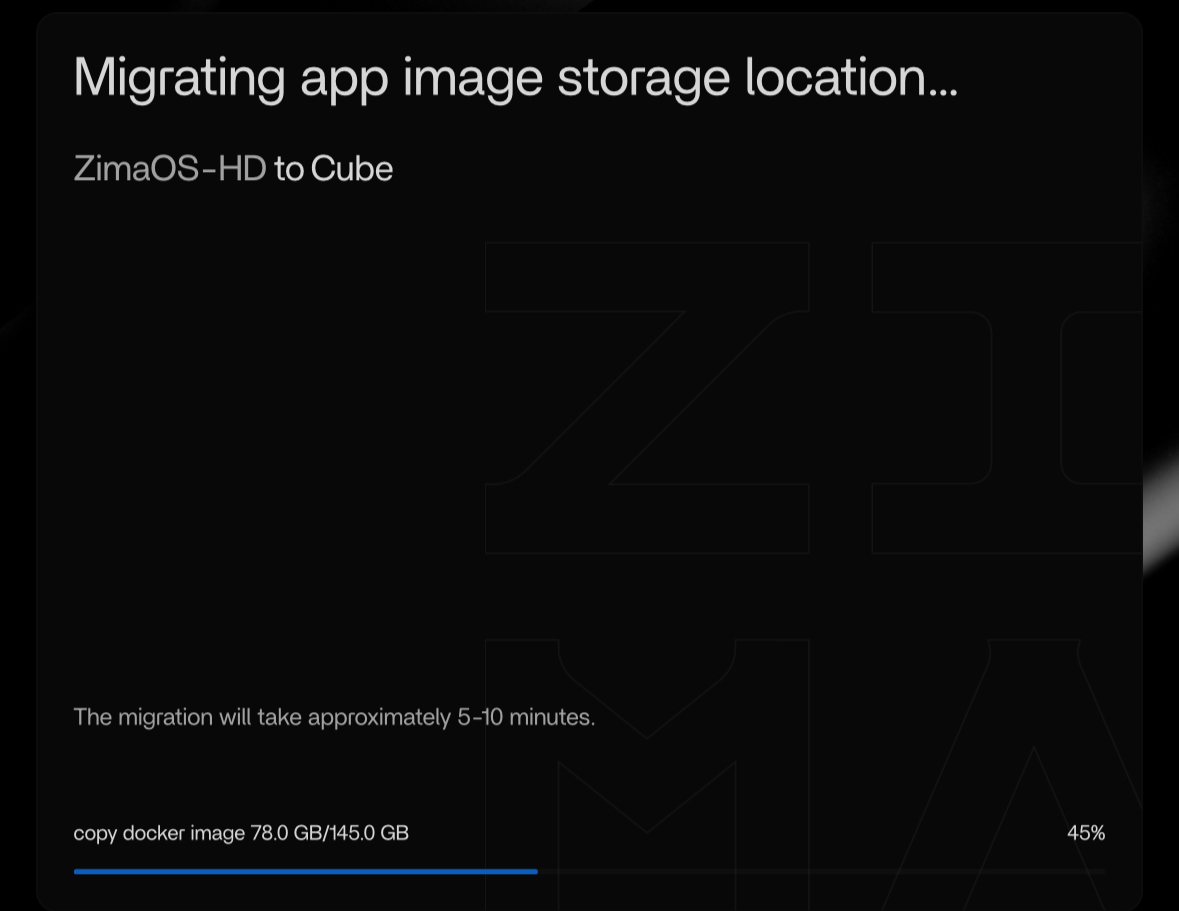

The second migration option showed the GB amount and I began the migration.

At first it was progressing, but now it has been stuck at 45% for an hour…

I will let it sit overnight and see what happens.

Update 2:

Overnight it went up to 49%… I guess I’ll just leave it alone and see what happens.