Does anybody have the same problem? Can somebody help me, please?

Yeah I’ve seen this one before, it’s a bit confusing at first.

Since your GPU is already working with Ollama and OpenWebUI, that basically rules out ZimaOS, drivers, and the GPU dock. So you’re not dealing with a hardware or passthrough problem.

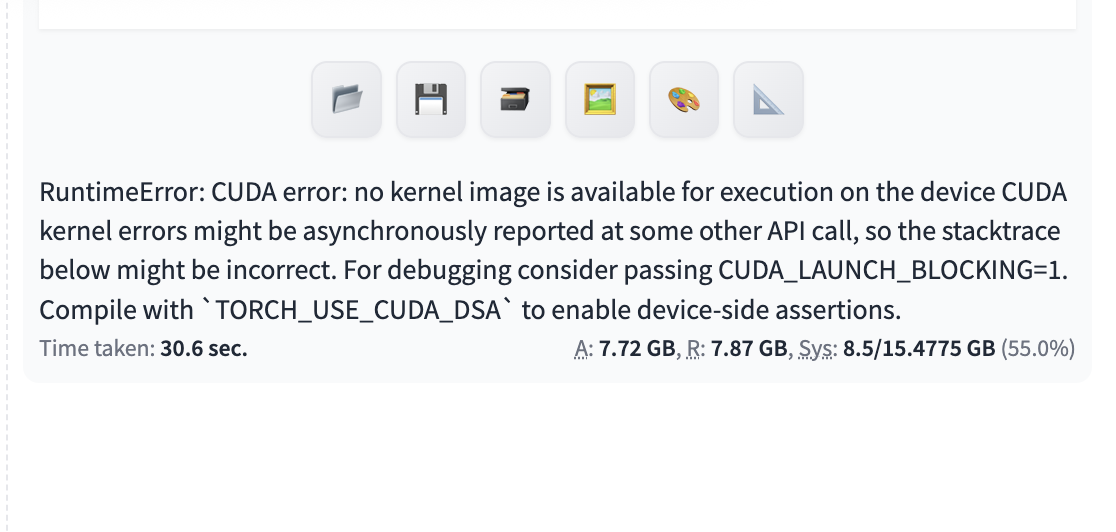

That CUDA error usually comes from the Stable Diffusion side using an older PyTorch/CUDA build that doesn’t support the newer GPU architecture yet. In simple terms, the container can “see” the GPU, but doesn’t have the right compiled kernels to actually use it.
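If you want to confirm that before switching images, here is a minimal sketch you could run with the Python interpreter inside the Stable Diffusion container. It compares the GPU's compute capability with the architectures the installed PyTorch build was compiled for. The helper names `parse_arch` and `is_arch_supported` are made up for this example, and the compatibility rule is simplified (it ignores architecture-specific variants such as `sm_90a`), so treat the result as a hint rather than a verdict.

```python
import re


def parse_arch(tag):
    """Split a tag such as 'sm_86' or 'compute_90' into (kind, major, minor)."""
    match = re.fullmatch(r"(sm|compute)_(\d+)[a-z]?", tag)
    if match is None:
        raise ValueError(f"unrecognised architecture tag: {tag!r}")
    digits = int(match.group(2))
    return match.group(1), digits // 10, digits % 10


def is_arch_supported(capability, arch_list):
    """Rough check: can a build with these architectures run on this GPU?

    Simplified rule (an assumption of this sketch):
    - 'sm_XY' binaries run on GPUs with the same major version and an
      equal or higher minor version.
    - 'compute_XY' PTX can be JIT-compiled for any equal or newer GPU.
    """
    major, minor = capability
    for tag in arch_list:
        kind, arch_major, arch_minor = parse_arch(tag)
        if kind == "sm" and arch_major == major and arch_minor <= minor:
            return True
        if kind == "compute" and (arch_major, arch_minor) <= (major, minor):
            return True
    return False


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed in this environment.")
    else:
        if not torch.cuda.is_available():
            print("PyTorch cannot see a CUDA device at all.")
        else:
            capability = torch.cuda.get_device_capability(0)
            arch_list = torch.cuda.get_arch_list()
            print("PyTorch:", torch.__version__, "| CUDA:", torch.version.cuda)
            print("GPU compute capability:", capability)
            print("Compiled architectures:", arch_list)
            if is_arch_supported(capability, arch_list):
                print("This build should have kernels for your GPU.")
            else:
                print("This build has no kernels for your GPU.")
```

If the last line says there are no kernels for your GPU, that matches the situation described above: the container sees the card, but the bundled PyTorch is too old for it.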

So you’re not doing anything wrong here; the image is just a bit behind.

If you can share which Stable Diffusion app or Docker image you’re running, we can narrow it down pretty quickly and point you to one that works with newer cards.

Thanks. That means I should wait until Stable Diffusion gets an update, right?